Vol.17 No.2 Special Issue on Revolutionizing Business Practices with Generative AI — Advancing the Societal Adoption of AI with the Support of Generative AI Technologies

Vol.17 No.2 (June 2024)

The widespread adoption of generative AI, powered by Large Language Models (LLMs), is expected to streamline everyday activities and enrich people’s lives. However, as businesses increasingly integrate these new technologies, it is crucial to accurately understand the various challenges that emerge from their adoption. To ensure the safe use of generative AI without compromising the potential of LLMs, it is also necessary to establish mechanisms for safe usage.

Over the past 30 years, NEC has been dedicated to the research and development of AI technologies, establishing itself as a leader in this domain. In 2023, NEC unveiled the largest AI supercomputer in Japan, setting a new benchmark for the industry. This achievement facilitated the creation of an innovative environment for the development of our own Large Language Model (LLM). Consequently, we introduced "cotomi," our generative AI technology, to external parties. Additionally, we have been proactive in devising and implementing safe usage protocols for LLMs, both within our organization and externally. Rapid establishment of internal guidelines has further accelerated the integration of generative AI into our daily business operations.

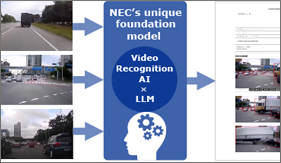

This special issue provides a comprehensive overview of NEC's generative AI technologies, showcasing a wide range of developments. It delves into the core technologies underpinning generative AI, illustrates advanced applications—such as the automation of video analytics tasks through the integration of generative AI with face and object recognition technologies—and discusses the establishment of rules and risk management methods that conform to global standards.

- ※Information such as the names of the departments to which the authors belong is as of March 2024.

Special Issue on Revolutionizing Business Practices with Generative AI — Advancing the Societal Adoption of AI with the Support of Generative AI Technologies

Remarks for Special Issue on Revolutionizing Business Practices with Generative AI

NISHIHARA Motoo

Executive Officer, Corporate EVP, CTO,

and President of Global Innovation Business Unit

Approaches to Generative AI Technology: From Foundational Technologies to Application Development and Guideline Creation

SAKAI Junji

Senior Principal Engineer

Global Innovation Business Unit

Market Application of Rapidly Spreading Generative AI

NEC Innovation Day 2023: NEC’s Generative AI Initiatives

NEC Innovation Day 2023 report

On December 15, 2023, NEC held an event called NEC Innovation Day 2023 to present the latest information about its research and development to investors and media. This was the third ever NEC Innovation Day with the first one held in 2021. At this event, the executive officers NISHIHARA Motoo, Corporate EVP & CTO, and YOSHIZAKI Toshifumi, Corporate EVP & CDO, took the stage to give a presentation about generative AI and other NEC technologies. An organizational structure which seamlessly links R&D and business was highlighted with the title “Research and Development of Advanced Technologies and Creation of New Business to Drive NEC’s Next Growth.” This article will report on the presentation and five demonstrations incorporating a large language model (LLM) exhibited at the venue.

Streamlining Doctors’ Work by Assisting with Medical Recording and Documentation

TSUJIKAWA Masanori, KUBO Masahiro, KIHARA Takayuki, LINGANAIK Dhananjaya Bedkani

In this paper, we explain a technology that uses a large language model (LLM) to streamline doctors’ work by assisting with medical recording and medical documentation. To support the creation of electronic medical records, the technology recognizes speech in conversations between doctors and patients and generates draft medical records. To support the creation of medical documents, it summarizes the progress of treatment from medical records and drafts documents such as medical certificates for insurance claims and referral letters. In a model case, LLM support is estimated to save 116 hours per doctor per year in creating medical records and 63 hours per doctor per year in creating medical documents. Development is further along for medical documents, where a verification experiment has confirmed that the time spent creating the documents can be halved. We will continue to develop technologies in preparation for the enforcement of work style reform for doctors in the revision of Japan’s Medical Care Act in April 2024 and aim to apply the technologies in other countries, such as India.

Using Video Recognition AI x LLM to Automate the Creation of Reports

LIU Jianquan, YAMAZAKI Satoshi, MIYANO Hiroyoshi

The rapid progress of large language models (LLMs) has generated great excitement for their use across various sectors such as transportation, finance, logistics, manufacturing, construction, retail, and healthcare. These sectors often handle complex types of data, including text, speech, images, and videos, creating a strong demand for LLMs capable of processing such varied inputs effectively. LLMs for still images are currently advancing significantly, and there is a growing focus on applying this technology to videos, which are rich in valuable information. In this paper, we explore NEC’s latest advancements in using LLMs to process long videos and examine how this technology is used in industry to streamline tasks like creating reports. We also outline plans for future developments in this exciting domain.

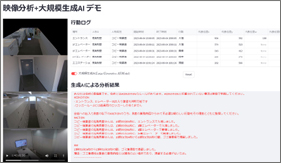

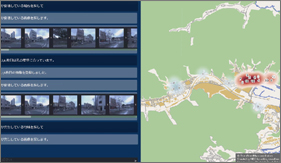

Understanding of Behaviors in Real World through Video Analysis and Generative AI

KANNA Yoshihiro, KAJIKI Yoshihiro

NEC believes that it is important to understand behaviors in the real world to achieve safety, security, fairness, and efficiency. However, it is difficult to understand complex or unexpected behaviors with conventional video analysis. Therefore, we propose the use of a technology that understands real-world behaviors and their context and that also predicts the intentions behind the behaviors as well as future actions by utilizing the latest generative AI. In this paper, we propose specific architectures that can achieve an understanding of real-world behaviors by using video analysis and generative AI, and we present the results of an experiment that demonstrated it is possible to understand suspected behaviors in office buildings with the proposed architecture.

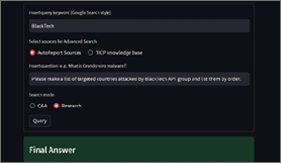

Automated Generation of Cyber Threat Intelligence

KAKUMARU Takahiro, TAKAHASHI Wataru, KATSUSE Riku, SIRACUSANO Giuseppe, SANVITO Davide, BIFULCO Roberto

To identify cybersecurity risks at an early stage, NEC’s intelligence analysts gather, accumulate, and analyze cybersecurity information daily. However, the scope of information to be gathered has expanded beyond cyberattacks to include political, economic, social, and technological trends. As a result, one of the challenges is how to appropriately narrow down sources of information and conduct an analysis while gathering information from a wider range of fields. NEC is working to automate the generation of cyber threat intelligence using generative AI for rapid, high-accuracy analyses. This paper presents NEC’s cyber threat intelligence initiatives and challenges, as well as two pipelines under development: an extraction and summarization pipeline for cyber threat information and a search and analysis pipeline for cyber threat-related information.

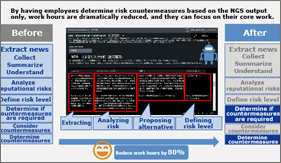

NEC Generative AI Service (NGS) Promoting Internal Use of Generative AI

KAWATO Katsushi

In May 2023, we launched the NEC Generative AI Service (NGS) for internal business use. For the large language model (LLM), we used not only GPT-3.5 from Microsoft’s Azure OpenAI Service but also GPT-4 and NEC’s LLM. Beyond making these mechanisms available, we established rules and prepared usage policies so that employees could use generative AI appropriately. In addition, to make full use of generative AI within the NEC Group and lead the way in achieving overwhelming improvements in productivity, we launched the NEC Generative AI Transformation Office and began providing the MyData service in December 2023, aiming to improve the value of the NGS. At the end of this paper, we present a variety of utilization cases.

Utilization of Generative AI for Software and System Development

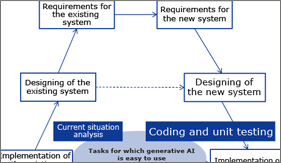

YANOO Kazuo

This paper provides an overview of how we can utilize generative AI in software and system development and introduces the initiatives of the NEC Group. Generative AI has great potential, but it currently has several technical challenges. Therefore, software and system development remains, for the present, a process that must be primarily performed by people. However, depending on the nature of the development project, generative AI can be used to streamline human work in a variety of tasks and to improve quality. The NEC Group plans to improve productivity and quality in software and system development by systematically using generative AI.

LLMs and MI Bring Innovation to Material Development Platforms

OBUCHI Kiichi, FUNAYA Koichi, TOYAMA Kiyohiko, TANAKA Shukichi, ONORO RUBIO Daniel, MIN Martin Renqiang, MELVIN Iain

In this paper, we introduce efforts to apply large language models (LLMs) to the field of material development. NEC is advancing the development of a material development platform. By applying core technologies corresponding to two material development steps, namely investigation activities (Read paper/patent) and experimental planning (Design Experiment Plan), the platform organizes documents such as papers and reports as well as data such as experimental results and then presents them to users interactively. In addition, with techniques that incorporate physical and chemical principles into machine learning models, AI can learn even with limited data and accurately predict material properties. Through this platform, we aim to achieve the seamless integration of materials informatics (MI) with a vast body of industry literature and knowledge, thereby bringing innovation to the material development process.

Disaster Damage Assessment Using LLMs and Image Analysis

TANI Masahiro, TERAO Makoto, SOGI Naoya, SHIBATA Takashi, SENZAKI Kenta, RODRIGUES Royston

Against the backdrop of the intensification of torrential rain disasters in recent years and concerns about the occurrence of a massive earthquake in the near future, there is a growing demand for further strengthening disaster control measures. In the event of a disaster, it is crucial to assess disaster damage quickly and accurately to minimize damage by expediting initial response activities such as evacuation guidance and rescue efforts. In this paper, we introduce a technology that utilizes a multitude of field images collected in the event of a disaster to assess the damage through the use of large language models (LLMs) and image analysis.

Fundamental Technologies that Enhance the Potential of Generative AI

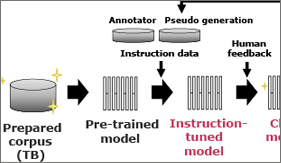

NEC's LLM with Superior Japanese Language Proficiency

OYAMADA Masafumi, AKIMOTO Kosuke, DONG Yuyang, YANO Taro, TAKEOKA Kunihiro, MAKIO Junta

NEC has developed its own large language model (LLM) with superior Japanese language proficiency and is accelerating its use for internal operations and business applications. Despite its compact design capable of operating on a single GPU, this model boasts world-class Japanese language proficiency, achieved through long-time training with large amounts of high-quality data, a robust architecture, and meticulous instruction tuning. Furthermore, with the motto, “Usable in business,” we identified the elements necessary for LLMs in practical applications, such as high-speed inference and processing long texts of more than 200,000 characters. This paper provides an overview of the design philosophy, the development process, and the performance aspects that we focused on strengthening.

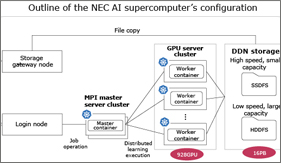

NEC’s AI Supercomputer: One of the Largest in Japan to Support Generative AI

In recent years, generative AI has dramatically evolved due to increases in the number of parameters and the volume of training data. Furthermore, the multimodalization of generative AI, which encompasses language, images, and audio, requires significantly increased computational power. Therefore, a system that efficiently and stably conducts large-scale distributed training using numerous GPUs is essential for developing foundation models for generative AI. This paper introduces the system architecture of NEC’s AI supercomputer that supports the training of generative AI and future initiatives.

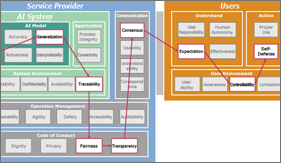

Towards Safer Large Language Models (LLMs)

LAWRENCE Carolin, BIFULCO Roberto, GASHTEOVSKI Kiril, HUNG Chia-Chien, BEN RIM Wiem, SHAKER Ammar, OYAMADA Masafumi, SADAMASA Kunihiko, ENOMOTO Masafumi, TAKEOKA Kunihiro

Large Language Models (LLMs) are revolutionizing our world. They have impressive textual capabilities that will fundamentally change how human users can interact with intelligent systems. Nonetheless, they still have a series of limitations that are important to keep in mind when working with LLMs. We explore how these limitations can be addressed from two different angles. First, we look at options that are already available, which include (1) assessing the risk of a use case, (2) prompting an LLM to deliver explanations, and (3) encasing LLMs in a human-centred system design. Second, we look at technologies that we are currently developing, which will be able to (1) more accurately assess the quality of an LLM for a high-risk domain, (2) explain the generated LLM output by linking to the input, and (3) fact check the generated LLM output against external trustworthy sources.

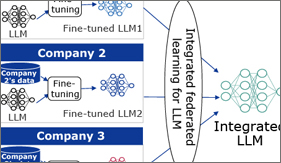

Federated Learning Technology that Enables Collaboration While Keeping Data Confidential and its Applicability to LLMs

The advancement of AI based on deep learning is remarkable, and large language models (LLMs) are even capable of natural interaction with humans. However, creating such AI technology requires a large amount of data, making data acquisition a crucial challenge. Federated learning addresses part of this challenge by making it possible to leverage highly confidential data scattered across multiple organizations. In this paper, we explain three basic types (horizontal, vertical, and transfer) of federated learning and discuss the applicability of federated learning to generative AI, including LLMs, which have garnered significant attention in recent years.
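As a rough illustration of the horizontal type, the sketch below shows the aggregation step commonly used in federated averaging: each organization trains locally and shares only model parameters, which a coordinator combines weighted by local sample counts. The function name, the two-organization setup, and the choice of federated averaging itself are assumptions made for illustration; the abstract does not state which aggregation method the paper uses.

```python
from typing import List, Sequence


def federated_average(
    client_weights: Sequence[Sequence[float]],
    client_sizes: Sequence[int],
) -> List[float]:
    """Combine client parameter vectors, weighted by local sample counts.

    Illustrative only: raw data never leaves the clients; the coordinator
    sees just the parameter vectors and the number of local samples.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    averaged = [0.0] * len(client_weights[0])
    for weights, size in zip(client_weights, client_sizes):
        for i, value in enumerate(weights):
            averaged[i] += value * size / total
    return averaged


# Hypothetical example: two organizations with 1 and 3 local samples.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
```

In a full training loop, the averaged parameters would be sent back to the clients for another round of local training.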

Large Language Models (LLMs) Enable Few-Shot Clustering

VISWANATHAN Vijay, GASHTEOVSKI Kiril, LAWRENCE Carolin, WU Tongshuang, NEUBIG Graham

Unlike traditional unsupervised clustering, semi-supervised clustering allows users to provide meaningful structure to the data, which helps the clustering algorithm to match the user’s intent. Existing approaches to semi-supervised clustering require a significant amount of feedback from an expert to improve the clusters. In this paper, we ask whether a large language model (LLM) can amplify an expert’s guidance to enable query-efficient, few-shot semi-supervised text clustering. We show that LLMs are surprisingly effective at improving clustering. We explore three stages where LLMs can be incorporated into clustering: before clustering (improving input features), during clustering (by providing constraints to the clusterer), and after clustering (using LLMs for post-correction). We find that incorporating LLMs in the first two stages routinely provides significant improvements in cluster quality, and that LLMs enable a user to make trade-offs between cost and accuracy to produce desired clusters.
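To make the "during clustering" stage more concrete, here is a minimal sketch of how pairwise answers could be turned into must-link constraints and merged into groups with a union-find structure. The `same_cluster` callable stands in for an LLM query and is replaced by a trivial keyword rule so the example runs offline; the function names and the sample texts are hypothetical, and this is not the constrained clusterer used in the paper.

```python
from typing import Callable, Dict, List, Tuple


def merge_must_links(
    items: List[str],
    pairs: List[Tuple[int, int]],
    same_cluster: Callable[[str, str], bool],
) -> List[List[str]]:
    """Group items by taking the transitive closure of must-link answers."""
    parent = list(range(len(items)))

    def find(i: int) -> int:
        # Follow parent pointers to the group root, compressing the path.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for a, b in pairs:
        # Each call represents one pairwise query to the oracle.
        if same_cluster(items[a], items[b]):
            parent[find(a)] = find(b)

    groups: Dict[int, List[str]] = {}
    for index, item in enumerate(items):
        groups.setdefault(find(index), []).append(item)
    return list(groups.values())


def keyword_oracle(left: str, right: str) -> bool:
    # Stand-in for an LLM judgment: texts match if they share a first word.
    return left.split()[0] == right.split()[0]


texts = ["refund request", "refund delay", "login error", "login failure"]
clusters = merge_must_links(texts, [(0, 1), (2, 3), (1, 2)], keyword_oracle)
```

The number of entries in `pairs` is the query budget, which is where the cost versus accuracy trade-off mentioned in the abstract would appear.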

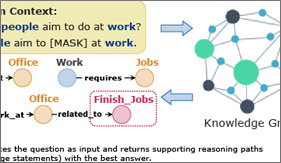

Knowledge-enhanced Prompt Learning for Open-domain Commonsense Reasoning

ZHAO Xujiang, LIU Yanchi, CHENG Wei, OISHI Mika, OSAKI Takao, MATSUDA Katsushi, CHEN Haifeng

Neural language models for commonsense reasoning often formulate the problem as a QA task and make predictions based on learned representations of language after fine-tuning. However, without any fine-tuning data or pre-defined answer candidates, can neural language models still answer commonsense reasoning questions by relying only on external knowledge? In this work, we investigate a unique yet challenging problem, open-domain commonsense reasoning, which aims to answer questions without being given any answer candidates or fine-tuning examples. The method proposed by a team comprising NECLA (NEC Laboratories America) and NEC Digital Business Platform Unit leverages neural language models to iteratively retrieve reasoning chains on the external knowledge base and does not require task-specific supervision. The reasoning chains can help to identify the most precise answer to the commonsense question and its corresponding knowledge statements to justify the answer choice. This technology has proven its effectiveness in a diverse array of business domains.

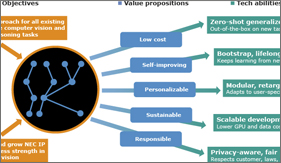

Foundational Vision-LLM for AI Linkage and Orchestration

KHAN Zaid, KUMAR B G Vijay, SCHULTER Samuel, CHANDRAKER Manmohan

We propose a vision-LLM framework for automating development and deployment of computer vision solutions for pre-defined or custom-defined tasks. A foundational layer is proposed with a code-LLM AI orchestrator self-trained with reinforcement learning to create Python code based on its understanding of a novel user-defined task, together with APIs, documentation and usage notes of existing task-specific AI models. Zero-shot abilities in specific domains are obtained through foundational vision-language models trained at a low compute expense leveraging existing computer vision models and datasets. An engine layer is proposed that comprises several task-specific vision-language engines that can be utilized compositionally. An application-specific layer is proposed to improve performance in customer-specific scenarios, using novel LLM-guided data augmentation and question decomposition, besides standard fine-tuning tools. We demonstrate a range of applications including visual AI assistance, visual conversation, law enforcement, mobility, medical image reasoning and remote sensing.

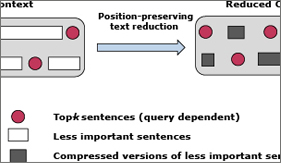

Optimizing LLM API usage costs with novel query-aware reduction of relevant enterprise data

AREFEEN Md Adnan, DEBNATH Biplob, CHAKRADHAR Srimat

Costs of LLM API usage rise rapidly when proprietary enterprise data is used as context for user queries to generate more accurate responses from LLMs. To reduce costs, we propose LeanContext, which generates query-aware, compact and AI model-friendly summaries of relevant enterprise data context. This is unlike traditional summarizers that produce query-unaware human-friendly summaries that are also not as compact. We first use retrieval augmented generation (RAG) to generate a query-aware enterprise data context, which includes key, query-relevant enterprise data. Then, we use reinforcement learning to further reduce the context while ensuring that a prompt consisting of the user query and the reduced context elicits an LLM response that is just as accurate as the LLM response to a prompt that uses the original enterprise data context. Our reduced context is not only query-dependent, but it is also variable-sized. Our experimental results demonstrate that LeanContext (a) reduces costs of LLM API usage by 37% to 68% (compared to RAG), while maintaining the accuracy of the LLM response, and (b) improves accuracy of responses by 26% to 38% when state-of-the-art summarizers reduce RAG context.
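The idea of query-aware context reduction can be sketched as follows: keep only the sentences most relevant to the query, then compare the size of the reduced context with the original. This is a simplified stand-in, not LeanContext itself. Relevance is scored by plain word overlap rather than the reinforcement learning described above, `k` is fixed rather than chosen per query, and whitespace-separated words are used as a rough proxy for billable tokens. All names and sample sentences are hypothetical.

```python
from typing import List


def reduce_context(query: str, sentences: List[str], k: int) -> List[str]:
    """Keep the k sentences sharing the most words with the query."""
    query_words = set(query.lower().split())
    scored = [
        (len(query_words & set(sentence.lower().split())), index)
        for index, sentence in enumerate(sentences)
    ]
    # Highest overlap first; ties go to the earlier sentence.
    top = sorted(scored, key=lambda pair: (-pair[0], pair[1]))[:k]
    keep = sorted(index for _, index in top)  # restore document order
    return [sentences[index] for index in keep]


def relative_saving(original: List[str], reduced: List[str]) -> float:
    """Fraction of words removed, as a rough proxy for token cost saved."""
    def count(parts: List[str]) -> int:
        return sum(len(part.split()) for part in parts)

    return 1 - count(reduced) / count(original)


context = [
    "The refund policy allows returns within 30 days.",
    "Our office closes on public holidays.",
    "Refund requests need a receipt.",
]
reduced = reduce_context("what is the refund policy", context, k=2)
saving = relative_saving(context, reduced)
```

Because API pricing is typically proportional to token count, the fraction of context removed gives a first approximation of the cost reduction, provided response accuracy is checked separately.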

For AI Technology to Penetrate Society

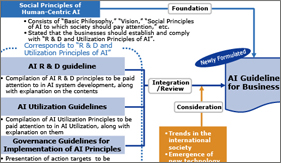

Movements in AI Standardization and Rule Making and NEC Initiatives

TABE Takashi, MOTONAGA Kazuhiro, SHIMAMURA Toshiya, NAGANUMA Miho, ORTOLAN Francois, FROST Lindsay

NEC has participated in efforts to develop standard specifications through organizations like the Institute of Electrical and Electronics Engineers (IEEE), the International Organization for Standardization/International Electrotechnical Commission (ISO/IEC), and the European Telecommunications Standards Institute (ETSI), not only for the development of AI technologies but also for their social implementation. With the advent of generative AI, countries are moving toward stricter regulations regarding AI, and standards related to AI governance are needed. This paper describes policies in Europe, the United States, and Japan; trends in multilateral frameworks such as the G7 Hiroshima AI Process; and trends in the development of guidelines and the standardization in line with them. This paper also presents NEC’s relevant initiatives.

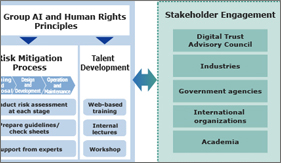

NEC's Initiatives on AI Governance toward Respecting Human Rights

MATSUBARA Akihiko, TOKUSHIMA Daisuke, SHIMAMURA Toshiya

NEC has formulated the “NEC Group AI and Human Rights Principles” to ensure that its business activities related to AI utilization respect human rights, and it is preparing internal systems, rules, talent development, and other measures to implement AI governance. In addition, NEC strengthens its ability to respond to new challenges arising from AI utilization by running the Digital Trust Advisory Council, which is composed of a variety of external experts. In this paper, we introduce NEC’s initiatives on AI governance in AI businesses, including biometric authentication.

Case Study of Human Resources Development for AI Risk Management Using RCModel

ITO Hirohiko, ITO Chihiro

As AI has become more widely used in recent years, AI governance to ensure proper use of AI has been drawing attention. Some regulations related to AI governance require appropriate risk management by people. Therefore, developing human resources who can be responsible for the risk management of AI services is expected to become an important issue. Since 2021, NEC has been conducting joint research with the University of Tokyo on how to develop AI-specialized human resources for AI risk management using the RCModel, a tool developed by the University of Tokyo. This paper will provide an overview of the human resource development program implemented in the joint research as well as the results and the achievements thereof and present the future prospects of the program.
