AI-Based Fraud and Risk Detection Service Ensures Transparency While Improving Operational Efficiency and Performance

Artificial intelligence has a growing influence on the way modern society functions. Consequently, fairness, transparency, and accountability in the decisions made by AI are becoming increasingly vital. With Heterogeneous Mixture Learning, our explainable artificial intelligence (XAI) technology, NEC has succeeded in meeting these three requirements. As an example of the effective use of XAI, this paper outlines NEC’s AI-based fraud and risk detection service, which supports fraud risk analysis at financial institutions. We also describe the component technologies that form the basis for this service.

1. Introduction

The novel coronavirus (COVID-19) pandemic has increased the risk of financial crimes. NEC already offers a selection of services, such as RiskTech*, that exploit advanced AI and digital technologies to mitigate risk and enable financial institutions to handle their products safely and securely. Key to establishing a robust defense against financial crimes is understanding the reasoning behind any operations or measures that AI implements against fraud risk at financial institutions. With this in mind, our R&D has placed heavy emphasis on improving and verifying AI “explainability.”

In this paper, we examine the latest developments in explainable AI (XAI), taking our own AI-based fraud and risk detection service as an example of applied XAI.

- *RiskTech: A coinage that combines “risk” and “technology.” This solution helps customers take proactive measures against increasingly complex financial risks by visualizing risk-related issues and optimizing the response using AI and other cutting-edge technologies.

2. Trends in XAI

In this section, we review trends in the field of XAI both in Japan and around the world.

2.1 Trends in Japan

With advancing progress in AI technology and an increase in applicable data for different scenarios, utilization of AI to support decision-making in critical sectors such as healthcare and finance1) has increased significantly in recent years, making “explainability” even more important. Against this backdrop, Japan’s Ministry of Internal Affairs and Communications (MIC) announced the “AI Utilization Principles” within the “AI Utilization Guidelines”2) in 2019, emphasizing the need for AI users and service providers to properly understand the details of the guidelines and to undertake to follow them. Among the points emphasized in these Principles are fairness, transparency, and accountability. The Principles also make it clear that the reasons underlying any conclusion reached by AI need to be explainable to stakeholders.

The Japanese government is pushing for more explainability, and social and financial factors are also making it more important to both users and providers of AI services. This indicates that demand for accountable AI systems will continue to grow.

2.2 Overseas trends

Active discussions about the explainability of AI are also under way internationally. At forums such as the Group of Seven (G7), the United Nations Educational, Scientific and Cultural Organization (UNESCO), and the Organisation for Economic Co-operation and Development (OECD), AI transparency and explainability have been major topics3). The explainability of AI is also regarded as an important issue in countries in Europe and North America, based on an understanding of the problem similar to that in Japan. Details of these discussions have been published in various guidelines and reports issued by the organizations that establish AI utilization policies.

3. Fraud and Risk Detection Service Using XAI

Against this backdrop, NEC has redoubled its R&D efforts in this field, working hard to achieve AI systems that are fair, transparent, and accountable. In this section, we look at a specific use case: the AI-Based Fraud and Risk Detection Service, which is built on top of NEC’s RiskTech solution.

3.1 Overview of the AI-Based Fraud and Risk Detection Service

In addition to taking anti-money laundering (AML) measures, financial institutions are now required to closely monitor potential fraudulent financial schemes. These include phone fraud, illegal remittance using online banking, and illegal stock transactions. NEC’s AI-Based Fraud and Risk Detection Service uses AI to facilitate greater efficiency and sophistication of anti-fraud operations and is already in service at multiple financial institutions.

3.2 Operational issues solved by the AI-Based Fraud and Risk Detection Service

In fraud monitoring operations at financial institutions, suspicious customers and transactions are generally detected with dedicated package software and purpose-built features that systematically extract fraud candidates. Many of these fraud detection systems use a rules-based detection method that raises alerts when certain conditions are met. For example, a typical AML transaction monitoring system applies various rules derived from past fraud cases, such as “amount paid: ≥n yen” and “number of deposits: ≥n times,” to extract transactions suspected of being money laundering. However, rules-based detection has a disadvantage. When rules are set broadly enough to prevent detection oversights, the result can be too many unnecessary alerts, or false positives. Reducing the workload of the fraud monitoring staff who must check these false positives is a major issue for financial institutions.
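The rules-based approach described above can be illustrated with a minimal sketch. The threshold values, field names, and sample records below are hypothetical stand-ins for the unspecified “n” values in the text; they are not taken from any actual monitoring system.

```python
from dataclasses import dataclass

# Hypothetical thresholds standing in for the "n" values in the text.
AMOUNT_THRESHOLD_YEN = 1_000_000
DEPOSIT_COUNT_THRESHOLD = 5


@dataclass
class AccountActivity:
    account_id: str
    amount_paid_yen: int  # total amount paid in the monitoring window
    deposit_count: int    # number of deposits in the monitoring window


def triggered_rules(activity: AccountActivity) -> list[str]:
    """Return the names of the rules this activity record satisfies."""
    hits = []
    if activity.amount_paid_yen >= AMOUNT_THRESHOLD_YEN:
        hits.append("amount_paid")
    if activity.deposit_count >= DEPOSIT_COUNT_THRESHOLD:
        hits.append("deposit_count")
    return hits


def extract_alerts(activities: list[AccountActivity]) -> dict[str, list[str]]:
    """Map each alerted account to the rules it triggered."""
    alerts = {}
    for activity in activities:
        hits = triggered_rules(activity)
        if hits:
            alerts[activity.account_id] = hits
    return alerts


# Hypothetical sample records.
sample = [
    AccountActivity("A-001", 1_500_000, 2),
    AccountActivity("A-002", 200_000, 7),
    AccountActivity("A-003", 50_000, 1),
]
alerts = extract_alerts(sample)
```

Lowering either threshold in this sketch catches more genuine cases but also raises alerts for more legitimate accounts, which is the false-positive trade-off described above.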

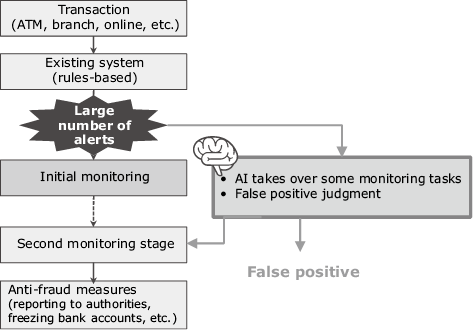

To increase efficiency and reliability, the AI learns from the results of previous judgments made by the fraud monitoring staff. The AI model then automatically classifies as false positives any alerts whose factors resemble those of past false positives. This can reduce the number of alerts that need to be checked by humans. For example, when monitoring is conducted in two steps as shown in Fig. 1, it is even possible for AI to take over some aspects of the first monitoring stage; only those alerts that the AI cannot classify need to be checked by humans.
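The triage idea in this paragraph can be sketched as follows. This is only an illustration: it uses a simple nearest-neighbor comparison against past staff judgments as a stand-in for the learning algorithm, which is not the method used by the service, and the data, feature choice, and the `auto_close_at` parameter are all hypothetical.

```python
import math

# Past alerts already judged by monitoring staff (hypothetical data).
# Features: (amount in millions of yen, deposits per week).
# Label: True means staff judged the alert a false positive.
# In practice the features would be scaled to comparable ranges first.
PAST_JUDGMENTS = [
    ((1.2, 1.0), True),
    ((1.1, 2.0), True),
    ((1.3, 1.0), True),
    ((4.8, 9.0), False),
    ((5.5, 8.0), False),
    ((1.4, 7.0), False),
]


def false_positive_share(features, k=3):
    """Share of the k most similar past alerts that were false positives."""
    ranked = sorted(PAST_JUDGMENTS, key=lambda item: math.dist(item[0], features))
    nearest = ranked[:k]
    return sum(1 for _, is_false_positive in nearest if is_false_positive) / k


def triage(features, auto_close_at=1.0):
    """Auto-close only when every similar past alert was a false positive."""
    if false_positive_share(features) >= auto_close_at:
        return "auto_closed"
    return "human_review"


decisions = {
    "near_past_false_positives": triage((1.2, 1.5)),
    "near_past_fraud": triage((5.0, 8.5)),
    "mixed_neighborhood": triage((1.3, 4.5)),
}
```

Keeping `auto_close_at` strict means that any alert with even one dissimilar neighbor still goes to a human, which matches the idea that only alerts the AI can classify with confidence are removed from the staff workload.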

3.3 Achieving the accountability essential for fraud monitoring operations

Fraud monitoring at financial institutions requires not only accurate assessments of suspicious transactions, but also understandable explanations of how those judgments were arrived at. For example, in AML transaction monitoring, financial institutions are obligated to report transactions suspected of being money laundering to the authorities. Because they are required to report why they believe the transactions are suspicious, they need to fully comprehend the basis for the AI’s judgment. In other words, “explainability” is indispensable. To make this possible, this system uses NEC’s Heterogeneous Mixture Learning technology, our original white-box AI, which provides a high level of explainability while maintaining maximum judgment accuracy.

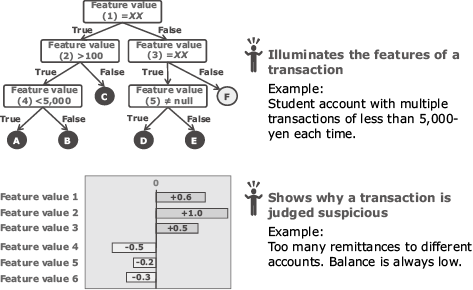

As shown in Fig. 2, Heterogeneous Mixture Learning visualizes the features of the transactions that the AI has deemed suspicious, the reasons for that judgment, and related information. This gives the fraud monitoring staff the information they need to examine the transaction more closely and to report the reasons for flagging it to the authorities.

4. The Technologies That Make XAI Possible

In this section, we introduce the various technologies―including Heterogeneous Mixture Learning mentioned in Section 3.3―that have helped to achieve explainability with AI.

4.1 How explainability is achieved

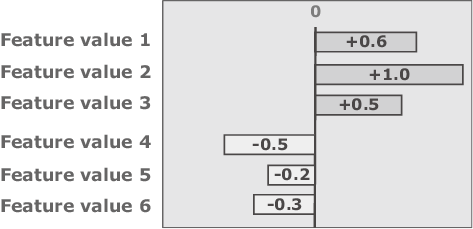

One of the ways to achieve explainability is to visualize the feature values on which the AI bases its judgment and the degree to which each of those feature values affects the judgment results. The concept of visualization of feature values is shown in Fig. 3.

Visualization of feature values can be accomplished directly with simple machine learning algorithms such as logistic regression. For complex AI algorithms such as deep learning, technologies to visualize feature values are also being developed, including Local Interpretable Model-agnostic Explanations (LIME) and SHapley Additive exPlanations (SHAP). These methods visualize the degree of impact of each feature value in an arbitrary AI model by constructing approximate local models around the data point that needs explanation. Because LIME and SHAP require additional analysis of the AI model’s judgment results, the impact of this extra step on actual operations needs to be taken into consideration when designing a system based on these methods.
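For the logistic regression case, the degree of impact of each feature value can be read directly from the model, as the following minimal sketch suggests. The coefficients, feature names, and input values are hypothetical and hand-set for illustration; in practice the coefficients would be learned from data.

```python
import math

# Hypothetical, hand-set coefficients; in practice these are learned from data.
INTERCEPT = -4.0
WEIGHTS = {
    "amount_million_yen": 0.6,
    "deposits_per_week": 0.3,
    "account_age_years": -0.2,
}


def explain(features):
    """Return the fraud probability and each feature's additive share of the log-odds."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions


probability, contributions = explain(
    {"amount_million_yen": 5.0, "deposits_per_week": 8.0, "account_age_years": 1.0}
)

# Rank features by the size of their effect on this particular judgment.
ranking = sorted(contributions, key=lambda name: abs(contributions[name]), reverse=True)
```

Because each contribution is simply a coefficient multiplied by a feature value, no separate analysis step is needed after the judgment, in contrast to post-hoc methods such as LIME and SHAP.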

4.2 Features of the Heterogeneous Mixture Learning technology

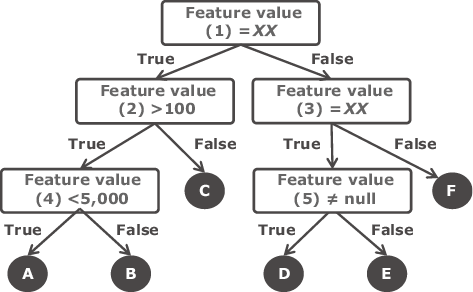

Heterogeneous Mixture Learning technology automatically discovers specific regularities in the relationships hidden within a wide variety of data and divides the data into cases based on those regularities. The rules that are applied are switched depending on the data being analyzed, which facilitates highly accurate predictions4). Fig. 4 shows a flow chart of the case-dividing process used by Heterogeneous Mixture Learning.

In addition, Heterogeneous Mixture Learning provides excellent explainability. LIME and SHAP provide explainability locally, for specific input data, after an AI model has been generated, whereas Heterogeneous Mixture Learning provides explainability from the point at which the AI model is generated. Specifically, besides visualizing the feature values that affect the judgment of the AI model, the function that visualizes the case-dividing regularities mentioned above is built into each AI model from the beginning. As a result, this technology can provide much more information than standard AI algorithms, thereby offering enhanced explainability.
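The combination of case dividing and per-case rules can be pictured with the following sketch. It is not NEC’s implementation: the cases, gating conditions, and weights are hand-written hypothetical values, and the use of a small linear scoring rule for each case is an assumption made for illustration. What the sketch aims to show is that an explanation can include both the case-dividing rule that applied and the per-feature contributions within that case.

```python
import math

# Hypothetical, hand-set model for illustration only. Each case pairs a
# human-readable gating rule with its own small linear scoring rule.
# Cases are checked in order, so later conditions assume earlier ones failed.
CASES = [
    {
        "name": "high-value transfer",
        "rule": "amount_million_yen >= 3.0",
        "applies": lambda x: x["amount_million_yen"] >= 3.0,
        "intercept": -1.0,
        "weights": {"overseas_destination": 2.0, "account_age_years": -0.3},
    },
    {
        "name": "frequent small deposits",
        "rule": "amount_million_yen < 3.0 and deposits_per_week >= 5",
        "applies": lambda x: x["deposits_per_week"] >= 5,
        "intercept": -2.0,
        "weights": {"deposits_per_week": 0.4, "account_age_years": -0.1},
    },
    {
        "name": "other",
        "rule": "amount_million_yen < 3.0 and deposits_per_week < 5",
        "applies": lambda x: True,
        "intercept": -3.0,
        "weights": {"overseas_destination": 1.0},
    },
]


def judge(x):
    """Pick the first matching case, score with its rule, and explain both steps."""
    case = next(c for c in CASES if c["applies"](x))
    contributions = {name: w * x[name] for name, w in case["weights"].items()}
    log_odds = case["intercept"] + sum(contributions.values())
    return {
        "case": case["name"],
        "case_rule": case["rule"],
        "contributions": contributions,
        "probability": 1.0 / (1.0 + math.exp(-log_odds)),
    }


result = judge({"amount_million_yen": 4.0, "deposits_per_week": 2,
                "overseas_destination": 1, "account_age_years": 0.5})
other = judge({"amount_million_yen": 0.5, "deposits_per_week": 7,
               "overseas_destination": 0, "account_age_years": 3})
```

Here `result["case_rule"]` and `result["contributions"]` are available as soon as the judgment is made, which corresponds to explainability being built into the model rather than added afterwards.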

5. Conclusion

In this paper, we have discussed the importance of XAI in facilitating transparency and accountability in AI applications such as fraud monitoring and introduced the technologies that form the basis for such systems. Because AI systems are developed and operated differently from conventional IT systems, the importance of addressing fairness, transparency, and accountability in the utilization of these systems bears reiteration. Rules for AI governance are being formulated in countries around the world to ensure that AI is used safely and securely. In Japan, these efforts led to the publication of the “AI Utilization Guidelines” by the MIC in 2019.

In addition to the Heterogeneous Mixture Learning introduced in this paper, NEC is engaged in a variety of R&D activities to achieve AI governance. These include technology that protects AI models from new attacks that infer the original data, using NEC’s original Secure Computing Technology and analysis of AI models. While continuing to strengthen AI governance and advance our RiskTech services, we will maintain our commitment to realizing a safe and secure society.

Reference

- 1)

- 2)

- 3)

- 4)

Author’s Profile

Manager

Holds concurrent posts in the Digital Integration Division, Digital Business Infrastructure Division, and Financial Systems Division

Cabinet Office, Government of Japan: Social Principles of Human-Centric Artificial Intelligence (AI), March 2019