Small data learning technology for deep learning enables highly accurate learning

Featured Technologies

August 19, 2019

Small data learning technology is expected to significantly advance the adoption of deep learning. We spoke with a researcher about the features of this technology and why it is needed.

Easing the lack of data that has long been a bottleneck in deep learning utilization

Doctor of Science

Research Fellow

Biometrics Research Laboratories

― What kind of technology is small data learning?

Deep learning has recently attracted worldwide attention and is now used in a wide variety of situations. However, it requires a large amount of data to deliver sufficient accuracy. NEC personnel visit customers’ sites to provide support, and we have encountered many cases in which the amount of data required became a bottleneck that prevented the technology from being implemented. When a physical model and an environment for running highly accurate simulations are available, the amount of training data can be increased by simulation, but most real business situations have no such model. For example, suppose you want to introduce deep learning into product inspection at your factory. Even if you can collect plenty of data on non-defective products, data on defective products is typically scarce, which ultimately leaves you without enough data for learning. Defective product data is also very difficult to simulate with a physical model, because defects can arise from a multitude of causes. Is it possible to achieve highly accurate learning with only a small amount of data? Researchers the world over have been grappling with this question in recent years.

Our small data learning technology was developed to address this kind of issue. We have confirmed that accurate learning is possible with only half the amount of data required by conventional methods. Methods such as data augmentation and adversarial example generation have been researched around the world, but this technology takes a different approach that is designed to make learning more efficient.

A unique approach focused on the middle layers, efficiently generating data that contributes to accuracy

― What kind of system is it?

Specifically, we exploited the layered structure of a deep neural network to develop a method for artificially generating data at the middle layers. In a deep neural network, data is progressively aggregated into meaningful symbols as it moves from the input layer to the output layer. In character recognition, for example, when many samples of the numerals 4 and 9 arrive at the input layer, they are registered simply as black-and-white image patterns. At this point the system does not recognize samples of the same numeral as being the same, so they are scattered as separate data points. As the data proceeds toward the output layer, the network learns to aggregate these varied samples, eventually recognizing them as groups of symbols, some belonging to pattern 4 and some to pattern 9.

In a deep neural network, conventional data augmentation and adversarial example generation augment the data by applying changes to it at the input layer. However, this does not ensure that the generated data will improve accuracy. Because the input data carries no meaning at that stage, this approach tends to generate data that does not actually exist or that does not contribute to accuracy. For example, data in which 4 and 9 overlap, or data containing meaningless noise, is unlikely to occur in reality. Likewise, simply rotating or resizing a sample does not make it significantly different from the existing data, so it contributes little to accuracy.
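To make the input-layer approach concrete, the kind of pixel-level augmentation described here can be sketched as follows. This is only a minimal illustration in NumPy, not the setup used in the experiments: the 28x28 image size, the random stand-in images, and the function names `add_pixel_noise`, `shift_pixels`, and `blend` are all assumptions made for the example.

```python
import numpy as np

def add_pixel_noise(image, rng, scale=0.1):
    """Add Gaussian noise to every pixel and clip back to the [0, 1] range."""
    noisy = image + rng.normal(0.0, scale, size=image.shape)
    return np.clip(noisy, 0.0, 1.0)

def shift_pixels(image, dx, dy):
    """Translate the image by (dx, dy) pixels, wrapping around at the edges."""
    return np.roll(image, shift=(dy, dx), axis=(0, 1))

def blend(image_a, image_b, weight=0.5):
    """Overlay two images pixel by pixel, for example a '4' on top of a '9'."""
    return weight * image_a + (1.0 - weight) * image_b

rng = np.random.default_rng(0)
four = rng.random((28, 28))  # hypothetical stand-in for a handwritten '4'
nine = rng.random((28, 28))  # hypothetical stand-in for a handwritten '9'

augmented = [
    add_pixel_noise(four, rng),  # same digit plus meaningless noise
    shift_pixels(four, 2, 0),    # same digit, barely different from the original
    blend(four, nine),           # an image that is neither a 4 nor a 9
]
```

Each function works directly on pixels and has no notion of which numeral the image shows, which is why the results can be either unrealistic or nearly identical to the data already collected.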

By contrast, when augmented data is generated at the middle layers, it is generated in a state close to the meaningful symbols that people recognize, such as 4 or 9, which makes it possible to generate more meaningful data. In addition, the data is generated so that the network output differs from the correct answer, which makes it harder to recognize. Generating large amounts of data that is meaningful yet hard to recognize in this way contributes significantly to improving accuracy. In handwritten character recognition and object recognition experiments, we have confirmed that this technology enables accurate learning with only half the amount of data required by conventional methods.
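The idea of generating hard examples at a middle layer can be sketched in a few lines. The article does not spell out the exact generation procedure, so the example below swaps in a simple gradient step on the middle-layer features, in the style of gradient-based adversarial example methods, as one plausible reading. The two-layer network, the random weights and inputs, the step size `epsilon`, and all function names are assumptions made for illustration.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def hidden_features(x, W1, b1):
    """Lower part of the network: input -> middle-layer features (ReLU)."""
    return np.maximum(0.0, x @ W1 + b1)

def head_loss(h, labels, W2, b2):
    """Upper part of the network: middle-layer features -> per-example cross-entropy."""
    probs = softmax(h @ W2 + b2)
    return -np.log(probs[np.arange(len(labels)), labels] + 1e-12)

def augment_in_middle_layer(h, labels, W2, b2, epsilon=0.5):
    """Move each feature vector a distance epsilon in the direction that raises the loss."""
    probs = softmax(h @ W2 + b2)
    onehot = np.eye(W2.shape[1])[labels]
    grad_h = (probs - onehot) @ W2.T  # gradient of the loss with respect to h
    norm = np.linalg.norm(grad_h, axis=1, keepdims=True) + 1e-12
    return h + epsilon * grad_h / norm

# Hypothetical data and randomly initialised weights, for demonstration only.
rng = np.random.default_rng(0)
W1, b1 = rng.normal(size=(784, 32)) * 0.05, np.zeros(32)
W2, b2 = rng.normal(size=(32, 10)) * 0.5, np.zeros(10)
x = rng.random((8, 784))
labels = rng.integers(0, 10, size=8)

h = hidden_features(x, W1, b1)
h_aug = augment_in_middle_layer(h, labels, W2, b2)
print(head_loss(h, labels, W2, b2).mean(), head_loss(h_aug, labels, W2, b2).mean())
```

The perturbed features stay within a fixed distance of the originals but push the network output away from the correct answer, so the second printed loss is higher than the first. In a training loop, one would presumably feed `h_aug` through the remaining layers with the original labels; that step is an assumption here rather than something the article states.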

Our research paper summarizing the technology was presented at the July 2019 International Joint Conference on Neural Networks (IJCNN).

Universal technology that can be utilized with any type of data, including images and audio

― Is this technology limited to character recognition and image recognition?

It’s true that our experiments have so far confirmed the effectiveness of this technology only for handwritten digit recognition and general object recognition. However, the technology applies changes to the output of the middle layers, so it can be used with any type of input data. For example, the same mechanism can be used to augment audio data instead of images.

With the conventional approach of augmenting data at the input layer, the augmentation had to be designed around how experts in that data’s domain would go about increasing it. With this technology, changes are not applied to the data itself. Data is generated automatically inside the network, so the same system can be used regardless of the characteristics of the data. We believe it will be applicable to systems that use deep neural networks not only for character recognition and image recognition, but in a wide range of situations.

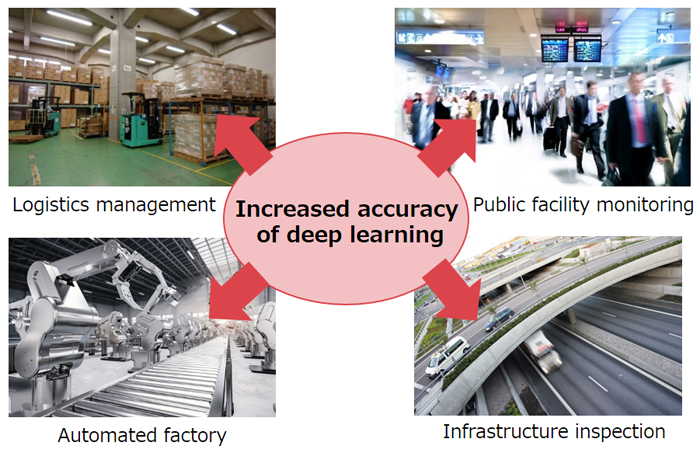

― What kinds of applications come to mind?

We think it can be applied to visual inspection of products, infrastructure maintenance, and many other situations requiring the use of deep learning. We want to demonstrate that this technology can now be used effectively with a variety of data, so we intend to collaborate with business divisions to consider even more possible applications.

- ※ The information on this page was current at the time of publication.