Achieving Significant Acceleration and Cost Reduction in Machine Learning

New Generation of Vector Processors Evolving Separately From GPUs

Featured Technologies October 25, 2017

A new choice has appeared among the machine learning processors that are essential to AI technologies. We spoke with Takuya Araki from the System Platforms Laboratories about the new generation of vector processors which achieve stunning processing speed increases and cost reductions in fields where the computational GPUs popular in recent years had difficulty producing results.

New generation of processors that excel at parallel processing

Takuya Araki, Principal Researcher, System Platforms Laboratories

― What are the features of the newly developed vector processors?

Vector processing is an architecture that excels at parallel processing because it performs the same calculation on many data elements at once. Long ago, most supercomputers used the vector approach. The scalar approach, which partitions the data and processes it sequentially, then became mainstream, but by around 2000 the processing speed of individual processors had already leveled off. How to carry out parallel processing has since become a central issue, and we have once again entered an era in which the potential of vector computers, which excel at parallel processing, is being reconsidered. NEC has consistently believed in that potential and has continued development for many years. That accumulated technology is now being applied in the new processors.

― How are they different from the computational GPUs that are used as high performance processors instead of CPUs?

GPUs are a type of hardware that focuses on packing in as many calculation units as possible to increase "peak calculation performance." They are therefore extremely effective processors for deep learning, which requires high calculation performance. In particular, they deliver massive power when handling data such as images and video.

In contrast, vector processors place the emphasis on "memory bandwidth," or how much data can be supplied from memory to the calculation units. Of course, their peak calculation performance is also high compared to CPUs, but their memory bandwidth is considerably higher still. Supplying as much data as possible from memory keeps the calculation units from sitting idle and allows parallel processing to use their full performance.
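The trade-off between peak calculation performance and memory bandwidth can be illustrated with the widely used roofline model, which is not mentioned in the interview and is brought in here only as an aid. The sketch below is a rough estimate under stated assumptions: the two devices and all of their numbers are hypothetical, chosen for illustration, and do not describe any NEC product or any specific GPU.

```python
def attainable_gflops(peak_gflops, bandwidth_gb_s, flops_per_byte):
    """Roofline estimate: throughput is capped either by the calculation
    units (peak) or by how fast memory can feed them (bandwidth * intensity)."""
    return min(peak_gflops, bandwidth_gb_s * flops_per_byte)


# Hypothetical devices; the figures are illustrative assumptions only.
devices = {
    "compute-oriented": {"peak_gflops": 10000.0, "bandwidth_gb_s": 500.0},
    "bandwidth-oriented": {"peak_gflops": 2000.0, "bandwidth_gb_s": 1200.0},
}

# Assumed arithmetic intensities (flops per byte moved from memory).
# A sparse matrix-vector product does about 2 flops per stored entry,
# and each entry costs roughly 12 bytes (8-byte value + 4-byte index).
workloads = {
    "dense matrix multiply (assumed 50 flops/byte)": 50.0,
    "sparse matrix-vector product (about 2/12 flops/byte)": 2 / 12,
}

for workload, intensity in workloads.items():
    print(workload)
    for name, dev in devices.items():
        gflops = attainable_gflops(dev["peak_gflops"], dev["bandwidth_gb_s"], intensity)
        print(f"  {name}: about {gflops:.0f} GFLOPS attainable")
```

With these assumed numbers, the compute-oriented device is ahead on the dense workload, while the bandwidth-oriented device is ahead on the sparse workload, because there the calculation units are limited by how quickly data arrives rather than by their peak speed.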

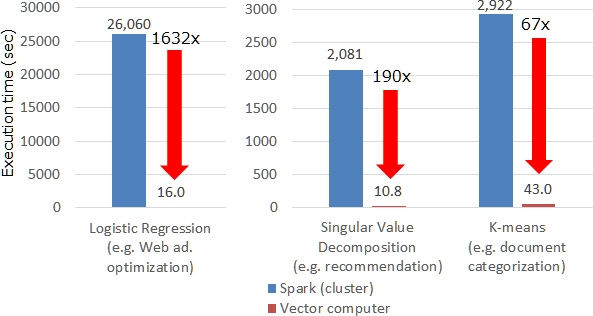

As a result, vector processors offer far-reaching benefits in machine learning areas outside of deep learning. For example, they will have a significant impact sooner rather than later in web ad optimization, e-commerce (EC) site recommendation displays, news site categorization, and other areas where machine learning is already in use. If you are presently using a large-scale cluster composed of many servers to conduct machine learning, then you can definitely achieve an increase in speed and a reduction in cost.

Use Spark and Python without modifications

― Can they be linked to existing machine learning systems?

If you are a user of Spark (Note 1), which is widely used in machine learning today, then you can use them without modifying your programs. We are developing middleware that will let you use the products being announced now in the usual way from Spark once you purchase and connect them, so you won't be aware of any hardware differences.

In addition, for Python (Note 2) we also support the scikit-learn (Note 3) interface, so you don't have to worry about any special settings or operations and can use it as you always have.

Eliminating waste and accelerating sparse matrix processing

― How has the processing efficiency of the vector calculation been improved?

The processing efficiency has been significantly improved by two breakthroughs that focus on the characteristics of the "sparse matrix" (Note 4), which is often seen in the large-scale data handled by machine learning.

The first breakthrough is the "hybrid format," which divides the processing by column and by row to fully utilize the calculation units and keep processing throughput high. Vector calculation, which carries out parallel processing all at once, delivers its intrinsic performance only when processing throughput stays high. However, the sparse matrices often handled in machine learning cause processing throughput to vary, which frequently resulted in wasted processing. In the newly developed technology, we found a way to divide the processing by row and by column so that throughput stays high in every cycle.
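The interview does not spell out the layout of the hybrid format, so the following is only a minimal sketch of the general idea, assuming one common way to split a sparse matrix: the first few nonzero entries of every row go into fixed-width, column-ordered slabs (an ELL-style layout), and whatever is left over from unusually long rows is kept in a separate row-indexed list. The function names, the `width` parameter, and this particular split are assumptions made for illustration and may differ from NEC's actual format.

```python
def to_hybrid(rows, width):
    """Split a sparse matrix into a regular part and an irregular part.

    rows  : list of rows, each a list of (column, value) nonzero entries
    width : number of entries per row kept in the regular part (assumed knob)
    """
    n = len(rows)
    # Slab k holds the k-th nonzero of every row; short rows are padded
    # with (column 0, value 0.0), which adds nothing to the result.
    slab_cols = [[0] * n for _ in range(width)]
    slab_vals = [[0.0] * n for _ in range(width)]
    overflow = []  # (row, column, value) entries beyond `width`
    for i, row in enumerate(rows):
        for k, (col, val) in enumerate(row):
            if k < width:
                slab_cols[k][i] = col
                slab_vals[k][i] = val
            else:
                overflow.append((i, col, val))
    return slab_cols, slab_vals, overflow


def hybrid_spmv(slab_cols, slab_vals, overflow, x, n_rows):
    """Multiply the hybrid-format matrix by the dense vector x."""
    y = [0.0] * n_rows
    # Regular part: every slab is one uniform loop across all rows,
    # the kind of long, even work that suits a vector unit.
    for cols, vals in zip(slab_cols, slab_vals):
        for i in range(n_rows):
            y[i] += vals[i] * x[cols[i]]
    # Irregular part: leftover entries from the few long rows.
    for i, col, val in overflow:
        y[i] += val * x[col]
    return y


# Rows of very different lengths, as is typical of sparse data.
rows = [
    [(0, 1.0), (2, 2.0)],
    [(1, 3.0)],
    [(0, 4.0), (1, 5.0), (2, 6.0), (3, 7.0)],
]
x = [1.0, 2.0, 3.0, 4.0]
slab_cols, slab_vals, overflow = to_hybrid(rows, width=2)
print(hybrid_spmv(slab_cols, slab_vals, overflow, x, len(rows)))
```

Choosing a small `width` keeps padding low for short rows, while the overflow list absorbs the long rows so that they do not force padding onto every other row.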

While the hybrid format accelerated the processing within a single processor, another important factor is the communication between processors. Machine learning repeats the same cycle many times until the learning converges: the computed values are aggregated and then distributed to each processor to be used in the next round of calculation. Communication speed is therefore an extremely important factor.

In the newly developed technology, we focused on the fact that columns of the sparse matrix that contain no values do not need to be transmitted for the corresponding result, so we transmit only the columns where values exist. As a result, for some data we were able to reduce the communication volume per cycle from 100 MB to 5 MB.
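The idea of sending only the entries that hold values can be sketched as follows. This is a simplified illustration rather than NEC's implementation: the helper names and the assumed sizes of 8 bytes per value and 4 bytes per index are hypothetical, and the example does not attempt to reproduce the 100 MB and 5 MB figures quoted above.

```python
def pack_sparse(values):
    """Keep only nonzero entries as (index, value) pairs for transmission."""
    return [(i, v) for i, v in enumerate(values) if v != 0.0]


def unpack_sparse(pairs, length):
    """Rebuild the full-length vector on the receiving side."""
    out = [0.0] * length
    for i, v in pairs:
        out[i] = v
    return out


def dense_bytes(length, value_bytes=8):
    """Bytes needed to send every entry, zero or not (assumed 8-byte values)."""
    return length * value_bytes


def sparse_bytes(pairs, value_bytes=8, index_bytes=4):
    """Bytes needed to send only the nonzero entries plus their indices."""
    return len(pairs) * (value_bytes + index_bytes)


# A mostly empty update vector, standing in for one processor's result.
update = [0.0] * 1000
update[3] = 0.5
update[420] = -1.25

pairs = pack_sparse(update)
print("dense :", dense_bytes(len(update)), "bytes")
print("sparse:", sparse_bytes(pairs), "bytes")
assert unpack_sparse(pairs, len(update)) == update  # nothing is lost
```

Because each transmitted entry now carries an index as well as a value, the saving depends on how sparse the data is; the approach pays off when most entries are empty.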

Approaching supercomputer levels of performance

― Who are your target customers?

Vector computing is an architecture that was originally developed in the supercomputer field. With this new technology, however, tower and rack-mounted models have also been added to the hardware product lineup. I believe they can help improve processing speed and reduce cost and space, not only for customers who already use NEC's SX-ACE vector supercomputer but also for customers who are currently building large-scale clusters for machine learning. For example, they can support practical applications such as timely recommendations at a company running an EC site, or optimized and efficient ad displays at a company handling web ads.

Furthermore, they are now available in an affordable price range for customers who were previously unable to implement vector computers due to the high cost. I believe that we have developed a solution that can be offered as a new alternative in high-speed processing computers, so customers should feel free to contact us.

- (Note 1) An open source distributed processing platform developed and published by the Apache Software Foundation.

- (Note 2) A programming language that is often used for data analysis, etc.

- (Note 3) An open source Python machine learning library.

- (Note 4) A matrix with values in only some elements while others are zero. For example, user ratings at an EC site might concentrate on the popular products while many products have no ratings at all.

- ※The information posted on this page is the information at the time of publication.

Related Links

- October 25, 2017 Press Releases: NEC releases new high-end HPC product line, SX-Aurora TSUBASA - New computing platform expands the horizons of supercomputing, Artificial Intelligence and Big Data analytics -

- July 3, 2017 Press Releases: NEC accelerates machine learning for vector computers - Processing speed increased by more than 50 times -