Contributing to the spread of local 5G networks through the automation of network operations: Learning-based communications analysis technology

Featured Technologies, March 13, 2020

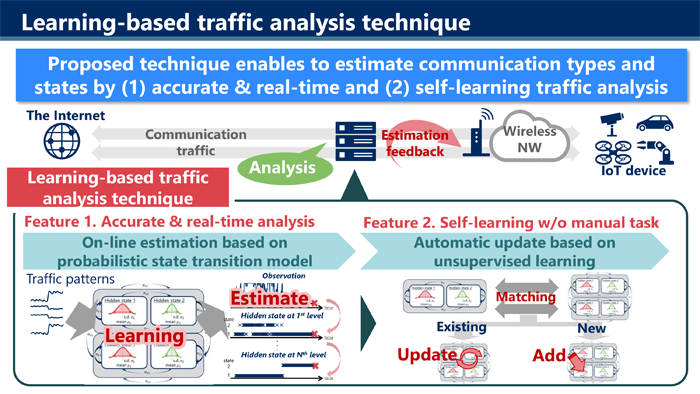

Network operations previously required analysis by experts, but NEC has developed technology that automates the process. Local 5G networks need to be run as private networks in order to fully utilize their capabilities, and this technology can automate the network operations that requires. We interviewed the researchers behind the technology.

The world’s first network operations automation technology which can improve the performance of networks

Research Manager

System Platform Research Laboratories

― What kind of technology is the learning-based communications analysis technology?

Iwai: Careful, periodic analysis by multiple experts has always been essential to increasing the performance of mobile networks such as 5G and LTE. That’s because the communications traffic of each individual application has to be tracked and assigned a priority, bandwidth limits have to be set, and regular adjustments have to be made to the network. Learning-based communications analysis technology makes it possible to automate that analysis. It automatically analyzes things like the communications traffic patterns of a wireless network and can determine in real time how applications are being used. Using smartphones as an example, the technology can use communications traffic patterns to accurately determine in real time what an application is being used for, such as browsing the Internet or watching a video. With IP cameras, it can use the communications traffic to determine whether anyone is on camera and whether there is more than one individual.

Additionally, the technology has the capability to learn on its own without management by people. It can compare past data to the current situation to ensure that the parameters it uses are always up to date. The technology can also react flexibly to the daily changes in communications traffic patterns and continue to provide highly accurate analysis. The closest existing self-optimizing technology would be SON (Self-Organizing Network) used in LTE base stations, but our technology is on a completely different level. That’s because an SON uses AI to learn from radio wave patterns on a per-day basis and then makes changes the following day, whereas our learning-based communications analysis technology can make changes in real time. It’s capable of following traffic on a per-millisecond basis and making updates.

With the current expansion of 5G network infrastructure throughout the world, the establishment of local 5G networks is also accelerating. Unlike networks provided by telecommunication service providers, local 5G networks are private networks organized and managed by corporations and municipalities. The establishment of private networks is gathering a lot of attention because private networks have reduced delays and increased security while also costing less in the long run because there is no need to pay monthly network fees.

I believe that our technology will play a major role in the expansion of local 5G networks. Our technology will eliminate the need to put together a team of experts required for running a private network while also making continued network operations easy even without special knowledge or expertise.

Sawabe: Within the realm of communications analysis research, autonomous learning technology is very new and there’s nothing in the world that compares to it. The paper covering this technology is scheduled to be presented at the IEEE/IFIP Network Operations and Management Symposium (NOMS) 2020, a core conference of the IEEE Communications Society and one of the most difficult international conferences at which to have a paper accepted.

The ability to learn autonomously and provide highly-accurate real-time analysis

Senior Researcher

System Platform Research Laboratories

― What allows the technology to provide highly-accurate predictions in real time?

Sawabe: To make this easier to understand, let me explain how the conventional methods developed. There have been many different kinds of communications analysis technologies. The most common approach has been to analyze a series of communications patterns from start to finish and then determine what sorts of applications or protocols were being used. However, the need to analyze the entire flow of communications meant sacrificing the ability to analyze things in real time. So the next approach that was developed analyzed traffic within set periods of time. Conditions would be established first, such as a set number of packets being received or a set amount of time passing, and analysis would begin once those conditions had been met. While this did alleviate the problem of being unable to provide real-time analysis, wireless quality fluctuates heavily, and how it fluctuates varies widely from one period of time to the next. This made any predictions somewhat unreliable. As you can see, conventional technologies have been unable to conduct analysis in real time and provide accurate predictions at the same time.

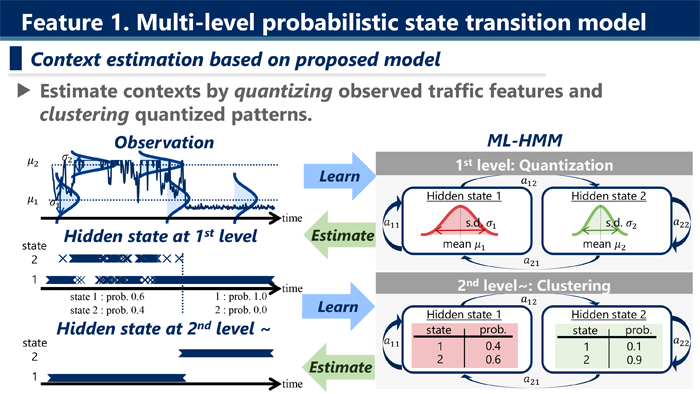
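To make the window-based conventional approach concrete, here is a minimal sketch in Python. The function name `fixed_windows`, the thresholds, and the sample packets are hypothetical and are not taken from any NEC implementation. The sketch only shows how a window closes after a set number of packets or a set amount of time.

```python
def fixed_windows(packets, max_packets=3, max_seconds=1.0):
    """Group (timestamp, size) packets into windows.

    A window closes when it holds `max_packets` packets, or when the next
    packet arrives `max_seconds` or more after the window's first packet.
    Both thresholds are illustrative assumptions.
    """
    windows, current = [], []
    for timestamp, size in packets:
        # Close the window on elapsed time before adding the new packet.
        if current and timestamp - current[0][0] >= max_seconds:
            windows.append(current)
            current = []
        current.append((timestamp, size))
        # Close the window on packet count.
        if len(current) == max_packets:
            windows.append(current)
            current = []
    if current:
        windows.append(current)
    return windows


# Hypothetical (timestamp in seconds, size in bytes) samples.
packets = [(0.0, 1500), (0.1, 1500), (0.2, 1500),
           (0.3, 60), (1.5, 60), (1.6, 900)]
windows = fixed_windows(packets)
print([len(w) for w in windows])
```

Analysis can only start once a window has closed, and the windows end up holding very different amounts of traffic, which is one way to picture why predictions from this approach can be unreliable.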

We solved this problem with the technology that we developed by creating a “multi-stage probability state transition model.” “Multi-stage” refers to how analysis is conducted over two main stages. The first stage is where quantification takes place. Communications traffic patterns are difficult to place into simple categories because they involve continuous and unstable variables such as the amount of data transferred and the time intervals. Attempting to perform an analysis on those variables as they are would cause the model to become bloated and complicated, increasing the number of calculations required. This reduces the analysis speed and is one of the primary factors preventing real-time analysis. That’s why we implemented a process that converts the continuously fluctuating variables of a time series into discrete variables.
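As a rough illustration of this first stage, the Python sketch below maps continuous packet sizes and inter-arrival times onto a small set of discrete symbols. The bin edges, the function names, and the sample values are assumptions made for illustration; the article does not say how the actual system chooses its discrete levels.

```python
from bisect import bisect_right

# Hypothetical bin edges; the real boundaries are not given in the article.
SIZE_EDGES = [100, 600, 1200]       # bytes
GAP_EDGES = [0.001, 0.010, 0.100]   # seconds between packets


def quantize(value, edges):
    """Return the index of the bin that `value` falls into."""
    return bisect_right(edges, value)


def to_symbols(packets):
    """Convert (size_bytes, gap_seconds) pairs into discrete symbols.

    Each symbol is one integer that combines the size bin and the gap bin,
    so a continuous two-dimensional series becomes a sequence over a
    small, fixed alphabet.
    """
    n_gap_bins = len(GAP_EDGES) + 1
    return [
        quantize(size, SIZE_EDGES) * n_gap_bins + quantize(gap, GAP_EDGES)
        for size, gap in packets
    ]


packets = [(1500, 0.0005), (1480, 0.0007), (60, 0.050), (900, 0.200)]
symbols = to_symbols(packets)
print(symbols)  # [12, 12, 2, 11]
```

The first two packets differ slightly in size and timing but receive the same symbol, which shows how discretization can absorb small fluctuations before any pattern matching takes place.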

The technology uses these discrete variables to find similar patterns, learns from them, and then models them as contexts. This is the second stage that we call clustering. A context is the categorization of an end result that has been reached based on a given purpose. Using smartphone apps as an example, it represents the inferred answer to the question of whether an app is being used to browse the Internet or watch a video. Because the amount of unwanted information has been reduced during the previous quantification stage, highly-accurate predictions can be made quickly during the clustering stage. In fact, predictions can be made just 10 milliseconds after observing the characteristics of network traffic.
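One simple way to picture a probability state transition model over discrete symbols is a first-order transition matrix per context. The Python sketch below is an assumption-laden illustration and not the published method. The two context models are fitted from short hand-made sequences purely so that the example runs, and the context names, the three-symbol alphabet, and the smoothing value are all hypothetical.

```python
import math


def fit_transitions(sequence, n_symbols, smoothing=1.0):
    """Estimate transition probabilities P[a][b] with additive smoothing."""
    counts = [[smoothing] * n_symbols for _ in range(n_symbols)]
    for a, b in zip(sequence, sequence[1:]):
        counts[a][b] += 1
    return [[c / sum(row) for c in row] for row in counts]


def log_likelihood(sequence, matrix):
    """Log-probability of the observed transitions under one context model."""
    return sum(math.log(matrix[a][b]) for a, b in zip(sequence, sequence[1:]))


def infer_context(sequence, contexts):
    """Pick the context whose transition model best explains the sequence."""
    return max(contexts, key=lambda name: log_likelihood(sequence, contexts[name]))


# Hypothetical symbol sequences (0 = small, 1 = medium, 2 = large packets).
contexts = {
    "video": fit_transitions([2, 2, 2, 2, 1, 2, 2, 2, 2, 2], n_symbols=3),
    "browsing": fit_transitions([0, 2, 0, 0, 1, 0, 2, 0, 0, 1], n_symbols=3),
}

print(infer_context([2, 2, 2, 1, 2, 2], contexts))  # video
print(infer_context([0, 0, 1, 0, 2, 0], contexts))  # browsing
```

Because the symbols are already discrete, scoring a short sequence takes only a handful of table lookups, which is consistent with the point that the second stage can run quickly once unwanted information has been reduced.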

Iwai: One of the key points was identifying that there are actually two separate issues that have become jumbled together. By using quantification to create visual representations of the changes in a communication itself and the unwanted information, we could remove those factors, and then we could find similar patterns to cluster together. Being able to separate the two issues was one of the major breakthroughs for us. The conventional approach was to tackle these two issues at once without separating them. The mainstream approach in the industry was to have the technology learn from a large pool of teaching data and then attempt to solve issues. However, this creates a dependency on the content of the teaching data and makes it impossible to identify any universal properties. Applications that aren’t registered in the teaching data can’t be recognized, and it becomes impossible to react when a mobile carrier performs tuning or an application is changed.

Sawabe: I originally majored in communications and that’s what my research was all about, so I actually knew absolutely nothing about the field of data analysis. That’s why I read a lot of papers on data analysis, and one of the major topics that I came across was time-series data. I discovered that there were a lot of interesting papers on the subject. The behaviors of time-series data within the field of communications can become very complex due to the behavior of applications and the quality of communication networks themselves. I was digging deeper in an attempt to figure out how to incorporate the methods used in data analysis, and as a result, I came to see that there were in fact two separate issues.

Iwai: Yes. This technology is the result of the successful combination of our knowledge of data analysis and expertise in the communications field. Knowledge of data analysis alone would have been insufficient for this technology because of the need for specific knowledge relating to networks, such as the presence of noise in communications and sudden disappearances due to packet loss. Conversely, this technology would also have been impossible with knowledge of communications alone. Sawabe is the kind of researcher who reads papers as a hobby. He’s very studious and always carries papers with him. He brought a lot of knowledge from various fields to the table for us to discuss, and that’s one of the things that allowed us to make a lot of progress.

― How does this technology achieve autonomous learning?

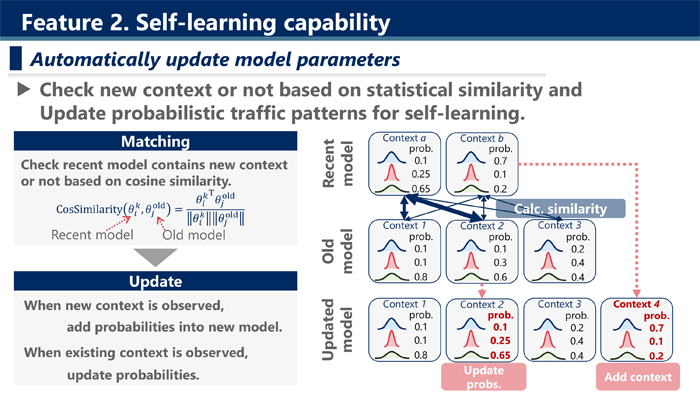

Sawabe: This technology analyzes communications and constructs models which it then compares to past models. By constantly attempting to determine if a pattern is new or not, it achieves autonomous learning. When a new pattern is created, it gets added to an existing model which then gets updated. Since it doesn’t rely on preset teaching data, it can evolve and learn autonomously. That allows it to respond flexibly to changes to an application or communications conditions.
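The compare-then-update loop described here can be sketched as a small novelty check in Python. Everything in the sketch is an illustrative assumption: the class name `PatternStore`, the use of symbol histograms as the model, the L1 distance, the threshold, and the learning rate. The article does not say how the actual system represents or compares its models.

```python
def histogram(symbols, n_symbols):
    """Normalized frequency of each discrete symbol in one observation window."""
    if not symbols:
        raise ValueError("need at least one symbol")
    counts = [0] * n_symbols
    for s in symbols:
        counts[s] += 1
    return [c / len(symbols) for c in counts]


def l1_distance(p, q):
    return sum(abs(a - b) for a, b in zip(p, q))


class PatternStore:
    """Keeps known patterns and decides whether a new observation is novel."""

    def __init__(self, n_symbols, novelty_threshold=0.5, learning_rate=0.2):
        self.n_symbols = n_symbols
        self.novelty_threshold = novelty_threshold  # assumed value
        self.learning_rate = learning_rate          # assumed value
        self.patterns = []

    def observe(self, symbols):
        """Return (pattern_index, is_new) and update the stored patterns."""
        profile = histogram(symbols, self.n_symbols)
        if self.patterns:
            idx = min(range(len(self.patterns)),
                      key=lambda i: l1_distance(profile, self.patterns[i]))
            if l1_distance(profile, self.patterns[idx]) <= self.novelty_threshold:
                # Known pattern: nudge the stored model toward the new data.
                lr = self.learning_rate
                self.patterns[idx] = [(1 - lr) * old + lr * new
                                      for old, new in zip(self.patterns[idx], profile)]
                return idx, False
        # Nothing close enough: register the observation as a new pattern.
        self.patterns.append(profile)
        return len(self.patterns) - 1, True


store = PatternStore(n_symbols=3)
results = [store.observe(w) for w in ([2, 2, 2, 1], [2, 2, 1, 2], [0, 0, 1, 0])]
print(results)  # [(0, True), (0, False), (1, True)]
```

No labeled teaching data is supplied at any point. The store starts empty and grows only when an observation fails to match anything it has already seen.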

This is where the aforementioned multi-stage analysis approach pays off. That’s because the models that result from this type of analysis are readable. In other words, the technology can look at a model and see the steps used during analysis to arrive at the model. That makes it easy to determine what parts of a model need to be changed or adjusted whenever changes in the environment occur. If we had used deep learning, which is currently the most popular approach in data analysis, to construct models, then it would be extremely difficult to update models in real time due to the difficulty of unraveling the complex steps used during calculations.

Aiding in the realization of smart cities and self-driving vehicles

― In what ways do you hope to further develop this technology?

Iwai: I believe this technology can be of use in a large variety of situations. For example, it can be utilized in any location looking to establish a local 5G network or IoT, such as stadiums and stations where videos are being streamed, or factories and logistics warehouses where transport robots (AGVs) are operated. We intend to focus especially on research into scenarios that require even greater accuracy and the ability to respond in real time. Specifically, we have our sights set on communications control systems at intersections, which will be essential for the safe operation of self-driving vehicles and will promote their use. We’re doing research in the hopes of further developing our learning-based data analysis technology in order to make the leap to learning-based control technology.

I also see the building of networks for individual traffic systems, factories, and stores as something that will eventually lead to the establishment of a smart city. One of the projects which NEC is currently backing heavily is the creation of platforms for smart cities, so I’m also hoping the technology can be used for communications systems that can assist in the operation of an entire city.

Sawabe: I’ll be speaking strictly as a researcher, but I’m hoping to develop this technology further so that in the end, it will be possible to create networks that merely have to be set up and will automatically optimize themselves from there. While it’s true that Wi-Fi routers behave similarly and handle network authentication and connections, devices which are self-managing and can automatically optimize the communication quality based on the environment do not exist yet. One of my big goals is to create such a device using private mobile networks.

Iwai: Yes. Wi-Fi is fast, but there’s no telling when you’ll lose your connection. Mobile networks are reliable, but they’re hard to set up and difficult to use in technically demanding environments. If the kind of technology that Sawabe is talking about can be developed, it can lead to a world where self-driving vehicles are accident free. I hope to focus our research on technology that can lead to the creation of such a society.

- ※ The information on this page is current as of the time of publication.